The big argument: Calculators – phone or physical?

17 March 2025

Turns out we did have them in our pockets when we're older

The big argument: Should we write another issue?

8 November 2024

How much barrel is left to scrape?

The big argument: What’s the best way to end a proof?

20 May 2024

QED or $\square$?

The big argument: Are there more fruit or doors?

6 December 2023

Fruit... it's obviously fruit.

The big argument: Is maths discovered or created?

22 May 2023

And who's responsible for my minus sign errors?

The big argument: Is Matlab better than Python?

9 November 2022

Which do you prefer?

The big argument: Are whiteboards better than blackboards?

25 May 2022

Board yet? You will be.

The big argument: Is dy/dx notation better than y’ or ẋ?

22 November 2021

The Leibniz–Lagrange–Newton showdown we all want to see

The big argument: Is the Einstein summation convention worth it?

1 May 2021

We hear arguments for and against this controversial convention

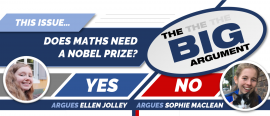

The big argument

30 October 2020

Does maths need a Nobel prize?